A second study from the team suggests that a drug used to treat paracetamol overdose may be able to help individuals who want to break their addiction and stop their damaging cocaine-seeking habits.

Although both studies were carried out in rats, the researchers believe the findings will be relevant to humans.

Cocaine is a stimulant drug that can lead to addiction when taken repeatedly. Quitting can be extremely difficult for some people. Around four in ten individuals who relapse report having experienced a craving for the drug; the remaining six in ten relapsed without reporting a craving, that is, for reasons other than ‘needing’ the drug.

“Most people who use cocaine do so initially in search of a hedonic ‘high’,” explains Dr David Belin from the Department of Pharmacology at the University of Cambridge. “In some individuals, though, frequent use leads to addiction, where use of the drug is no longer voluntary, but ultimately becomes a compulsion. We wanted to understand why this should be the case.”

Drug-taking causes the brain to release the chemical dopamine, which helps provide the ‘high’ experienced by the user. Initially, drug-taking is volitional, in other words it is the individual’s choice to take the drug, but over time it becomes habitual and beyond their control.

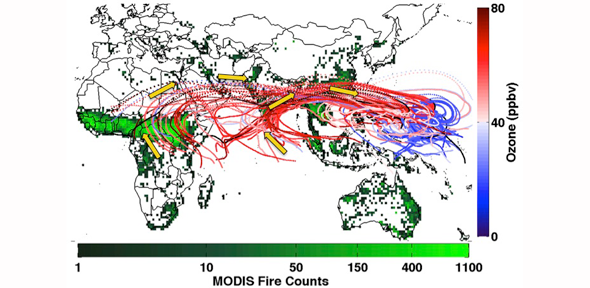

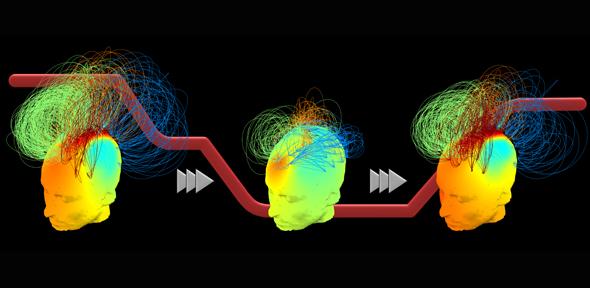

Previous research by Professor Barry Everitt from the Department of Psychology at Cambridge showed that when rats were allowed to self-administer cocaine, dopamine-related activity occurred initially in an area of the brain known as the nucleus accumbens, which plays a significant role in driving ‘goal-directed’ behaviour, as the rats sought out the drug. However, if the rats were given cocaine over an extended period, this activity transferred to the dorsolateral striatum, which plays an important role in habitual behaviour. This suggested that the rats were no longer in control but were instead responding automatically, having developed a drug-taking habit.

The brain mechanisms underlying the balance between goal-directed and habitual behaviour involve the prefrontal cortex, the brain region that orchestrates our behaviour. It was previously thought that this region was overwhelmed by stimuli associated with the drugs, or by the craving experienced during withdrawal; however, this does not easily explain why the majority of individuals relapsing to drug use do not experience any craving.

Chronic exposure to drugs alters the prefrontal cortex, but it also alters an area of the brain called the basolateral amygdala, which is associated with the link between a stimulus and an emotion. The basolateral amygdala stores the pleasurable memories associated with cocaine, while the prefrontal cortex manipulates this information, helping an individual to weigh up whether or not to take the drug: if an addicted individual takes the drug, this activates mechanisms in the dorsal striatum.

However, in a study published today in the journal Nature Communications, Dr Belin and Professor Everitt studied the brains of rats addicted to cocaine through self-administration of the drug and identified a previously unknown pathway within the brain that links impulse with habits.

The pathway links the basolateral amygdala indirectly with the dorsolateral striatum, circumventing the prefrontal cortex. This means that an addicted individual would not necessarily be aware of their desire to take the drug.

“We’ve always assumed that addiction occurs through a failure of our self-control, but now we know this is not necessarily the case,” explains Dr Belin. “We’ve found a back door directly to habitual behaviour.

“Drug addiction is mainly viewed as a psychiatric disorder, with treatments such as cognitive behavioural therapy focused on restoring the ability of the prefrontal cortex to control the otherwise maladaptive drug use. But we’ve shown that the prefrontal cortex is not always aware of what is happening, suggesting these treatments may not always be effective.”

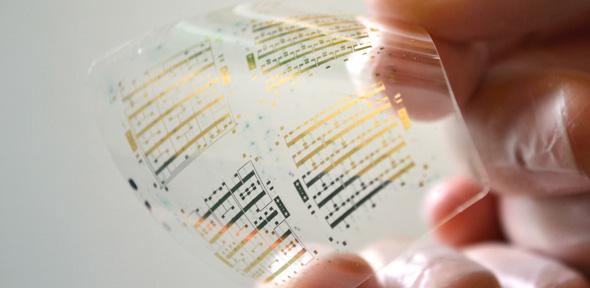

In a second study, published in the journal Biological Psychiatry, Dr Belin and colleagues showed that a drug used to treat paracetamol overdose may be able to help individuals addicted to cocaine overcome their addiction – provided the individual wants to quit.

The drug, N-acetylcysteine, had previously been shown in rat studies to prevent relapse. However, it later failed in human clinical trials. Further analysis suggested that although it did not lead addicted individuals to stop using cocaine, it did help those who were already trying to abstain to refrain from taking the drug.

Dr Belin and colleagues used an experiment in which rats compulsively self-administered cocaine. They found that rats given N-acetylcysteine lost the motivation to self-administer cocaine more quickly than rats given a placebo. Moreover, once they had stopped working for cocaine, they tended to relapse at a lower rate. N-acetylcysteine also increased activity in the brain of a particular gene associated with plasticity, the ability of the brain to adapt and learn new skills.

“A hallmark of addiction is that the user continues to take the drug even in the face of negative consequences – such as on their health, their family and friends, their job, and so on,” says co-author Mickael Puaud from the Department of Pharmacology of the University of Cambridge. “Our study suggests that N-acetylcysteine, a drug that we know is well tolerated and safe, may help individuals who want to quit to do so.”

References

Murray, JE et al. Basolateral and central amygdala differentially recruit and maintain dorsolateral striatum-dependent cocaine-seeking habits. Nature Communications; 16 December 2015

Ducret, E et al. N-acetylcysteine facilitates self-imposed abstinence after escalation of cocaine intake. Biological Psychiatry; 7 October 2015

Individuals addicted to cocaine may have difficulty in controlling their addiction because of a previously unknown ‘back door’ into the brain, circumventing their self-control, suggests a new study led by the University of Cambridge.

The text in this work is licensed under a Creative Commons Attribution 4.0 International License. For image use please see separate credits above.