Published today in the journal Nature Structural and Molecular Biology, the discovery suggests that many more DNA modifications than previously thought may exist in humans, mice and other vertebrates.

DNA is made up of four ‘bases’: molecules known as adenine, cytosine, guanine and thymine – the A, C, G and T letters. Strings of these letters form genes, which provide the code for essential proteins, and other regions of DNA, some of which can regulate these genes.

Epigenetics (epi - the Greek prefix meaning ‘on top of’) is the study of how genes are switched on or off. It is thought to be one explanation for how our environment and behaviour, such as our diet or smoking habit, can affect our DNA and how these changes may even be passed down to our children and grandchildren.

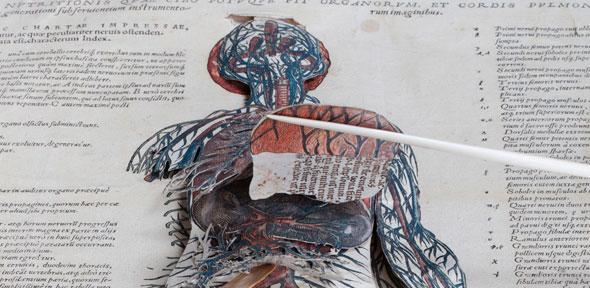

Epigenetics has so far focused mainly on studying proteins called histones that bind to DNA. Such histones can be modified, which can result in genes being switched on or off. In addition to histone modifications, genes are also known to be regulated by a form of epigenetic modification that directly affects one base of the DNA, namely the base C. More than 60 years ago, scientists discovered that C can be modified directly through a process known as methylation, whereby small molecules of carbon and hydrogen attach to this base and act like switches to turn genes on and off, or to ‘dim’ their activity. Around 75 million (one in ten) of the Cs in the human genome are methylated.

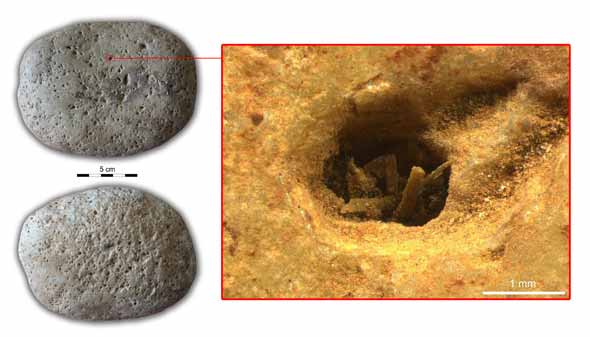

Now, researchers at the Wellcome Trust-Cancer Research UK Gurdon Institute and the Medical Research Council Cancer Unit at the University of Cambridge have identified and characterised a new form of direct modification – methylation of the base A – in several species, including frogs, mice and humans.

Methylation of A appears to be far less common than C methylation, occurring on around 1,700 As in the genome, but is spread across the entire genome. However, it does not appear to occur on sections of our genes known as exons, which provide the code for proteins.

“These newly-discovered modifiers only seem to appear in low abundance across the genome, but that does not necessarily mean they are unimportant,” says Dr Magdalena Koziol from the Gurdon Institute. “At the moment, we don’t know exactly what they actually do, but it could be that even in small numbers they have a big impact on our DNA, gene regulation and ultimately human health.”

More than two years ago, Dr Koziol made the discovery while studying modifications of RNA. There are 66 known RNA modifications in the cells of complex organisms. Using an antibody that identifies a specific RNA modification, Dr Koziol looked to see if the analogous modification was also present on DNA, and discovered that this was indeed the case. Researchers at the MRC Cancer Unit then confirmed that the modification was present on the DNA itself, rather than on any RNA contaminating the sample.

“It’s possible that we struck lucky with this modifier,” says Dr Koziol, “but we believe it is more likely that there are many more modifications that directly regulate our DNA. This could open up the field of epigenetics.”

The research was funded by the Biotechnology and Biological Sciences Research Council, Human Frontier Science Program, Isaac Newton Trust, Wellcome Trust, Cancer Research UK and the Medical Research Council.

Reference

Koziol, MJ et al. Identification of methylated deoxyadenosines in vertebrates reveals diversity in DNA modifications. Nature Structural and Molecular Biology; 21 Dec 2015

The world of epigenetics – where molecular ‘switches’ attached to DNA turn genes on and off – has just got bigger with the discovery by a team of scientists from the University of Cambridge of a new type of epigenetic modification.

