Ladies and gentlemen, it is a real pleasure to be able to speak to you today, and to join with friends and colleagues in celebrating the 650th anniversary of this great university. Six years ago, in Cambridge, we celebrated our 800th year, so I understand the pride that staff and students here in Vienna feel on reaching such an impressive milestone. It is a privilege and a personal pleasure to join you at such a special time.

I am delighted to be in Vienna, a city whose history was held in high regard throughout my childhood. Although I was born in the UK, my parents were Polish, and a single date is inscribed in my memory: 12th September 1683. This date marked the end of the Siege of Vienna, relieved under the leadership of the Polish King Jan Sobieski. It was the moment Vienna took her place at the heart of Europe, and it is a cornerstone in the history of your University. At a more personal level, my grandfather was born in the Austro-Hungarian region of Poland, and served in the Hussars during the First World War.

Like Cambridge, the University of Vienna has played a key role in academic enlightenment from the 19th century to today. Our ancient institutions share academic leadership and core values exemplified by our amazing alumni, from Newton to Hawking in Cambridge, and Landsteiner to Lorenz in Vienna. Few organisations today have both the history and the foresight to be able to look back over centuries of progress and simultaneously make bold plans for a long-term future.

Anniversaries are a time for celebrating achievements. They are a time for remembering challenges and threats that have been overcome, and for renewing the values that have sustained us. But they are also a time for looking forward. As universities committed to a long-term view, we must ask ourselves: what does the future look like in a year, in five years, yes, but most importantly in 20 years’ time? What are the challenges and opportunities we can predict, and how do we remain fit for purpose to deal with the unpredictable?

And it is the question of the future of universities such as Vienna and Cambridge – and our role in creating a prosperous Europe for the 21st century – that I want to focus on today.

The health of Europe and the health of our universities are strongly connected. In fact, I firmly believe that we cannot have one without the other. A strong Europe, benefiting from economic partnership, freedom of movement, and a deep respect for the individual – while respecting national and regional cultures – creates the conditions that universities need to thrive. Given those conditions, universities will continue to do what they have been doing for centuries: contribute to society through research and learning. New medicines will be developed. Tomorrow’s leaders nurtured. Jobs created. But without the right support, and without political leaders who are willing to take a long-term view, I fear universities – and countries – will suffer.

A little under four months ago, His Holiness Pope Francis described Europe as “elderly and haggard”. I can understand why he chose those words. We are still grappling with the effects of the deepest financial crisis in over 80 years. That pain has led to startling inequalities in employment, health and basic services across the European Union.

Europe and innovation

But we need to be optimistic. Europe is also a continent with huge potential: a region packed with many of the world’s best minds, boldest entrepreneurs and most dedicated teachers. All over Europe, and often located close to and associated with major universities, committed men and women are building new economies based on knowledge, discovery and innovation. They must be supported for the sake of our future.

Innovation – and specifically innovation driven by academic collaboration, technology clusters and exciting relationships between universities and businesses – will play an increasingly important role in driving forward growth and prosperity. The European Commission has rightly been vocal about this, and Horizon 2020 is a central part of its drive towards the EU target of investing 3% of GDP in research and innovation.

I see on a daily basis what commitment to innovation can do. In Cambridge, our innovation cluster began in 1960 with the simple idea of putting “the brains of Cambridge University at the disposal of industry”. One of its leading protagonists is a son of this city, Hermann Hauser, whose outstanding contribution is recognised by the Hauser Forum: a striking building on our West Cambridge campus that has become a focal point for entrepreneurship and knowledge exchange in our region.

Today, the result is the Cambridge cluster. In a city with a population of just over 120,000, more than 1,500 technology-based firms have been created, employing some 57,000 people and generating more than £13bn in revenue. This contributes to a local unemployment rate of just 1.4%. Nor do we always need to look to the USA for examples: the University Innovation Ecosystem Benchmark Study 2012–14 rated Cambridge alongside MIT and Stanford as the world’s top three, well ahead of the other 200 universities studied. And who carried out the study? MIT!

It is not just the Greater Cambridge Region that benefits from this. Two years ago, the global pharmaceutical firm AstraZeneca announced it would establish a new €400m R&D headquarters in the city. Without the University and its track record in world-leading science and medicine, together with the close proximity of two large hospitals and the environment of the cluster, the company could easily have gone to the US, to the detriment of the UK and European life sciences community, and of our economies.

Cambridge is very successful, and we already demonstrate that this kind of success can be achieved in Europe. Similar university-led innovation is evident across the continent. Vienna is known for its contribution to the life sciences cluster. Other examples include Munich’s high-tech and medical cluster, Estonia’s health-tech cluster in Tallinn and the technology park at Sophia Antipolis near Nice.

Investing in knowledge

This wealth of intellectual and entrepreneurial capital makes the EU, in the Commission’s own words, the “knowledge production centre of the world… accounting for almost a third of the world’s science and technology production”.

That sounds impressive, and it is. But it also has a genuine impact on the quality of people’s lives in countries all across Europe. Let’s remember that, after the financial crisis of 2008, the European countries that invested most heavily in research and innovation were the ones that recovered most quickly.

But standing still means falling behind. The potent, catalytic role that research and innovation play in creating prosperity for regions, countries and citizens has made them among the most fought-over commodities of the early 21st century. And the global competition is fierce, from America and from the major Asian economies, such as China and South Korea. Unfortunately, the indicators of power and influence in the knowledge economy do not look promising for Europe. China is investing far more in research and innovation and is fast catching up with the US, and more researchers from Europe head for America than the other way round.

In a few moments, I want to make the case for a more significant and autonomous commitment to research funding – both within the EU and nationally – and to outline my own view that the EU is still the body best positioned to support universities in their role as creators of economic growth.

But before I do, let us look at some of the unique attributes of universities that make us such effective innovators and contributors to growth and social wellbeing.

Universities – four key attributes

First, we are very good at taking the long-term view. Today’s leading European universities – including Vienna – have long and rich histories suggesting that they have a resilience that can deal with uncertainty. We pre-date and have survived many economic and political upheavals. How? The value we place on autonomy. Autonomy at the level of individual researchers, who have the freedom to follow their intellectual curiosity, but also autonomy at the institutional level itself.

At Cambridge, this dates to the 16th century – the right granted to us by Queen Elizabeth I to govern ourselves. We prize this greatly and never take it for granted. It gives us the advantage of a strong focus, and an ability to take bold decisions that support our mission.

Let me give you an example. Two years ago, the University of Cambridge committed to the largest expansion of our campus in our 800-year history – a project that will ultimately cost us £1bn. Why? Because we are committed to ensuring that the Cambridge of 2040 carries forward our mission in a new, different and still uncertain world: a world that we do not yet understand, but need to meet head on and adapt to. As a university, we are concerned about overspecialisation, because it restricts flexibility; and who here can predict where the next major discovery on the scale of the structure of DNA will occur? Meeting that uncertainty requires new, adaptable research and teaching strategies and facilities, as well as homes for both staff and students, all of which will be found on the new campus.

Second, we are focused on excellence in everything we do. At Cambridge, as at nearly all British universities, this starts when we select 17- and 18-year-olds to study for their undergraduate degrees. We seek to encourage the very brightest students to apply for what are fiercely contested places. It doesn’t matter what their backgrounds are, where they go to school, or whether their parents have been to university. We want the brightest students, and those with the most potential. And we work with schools in every part of the country to encourage children to put themselves forward to study at Cambridge, including those who may not have the confidence to do so. Our pursuit of talent extends to our PhD students, our research staff and our most senior professorial positions.

Third, we value diversity: diversity of opinion, as well as diversity in our staff and student body, is vital to our success.

Around 60% of our postgraduate students come from overseas, and we recruit 25% of our research staff from within the EU. Without the EU’s support in creating mobility for international students and early career researchers, our contribution to the world would be severely compromised.

Finally, we are excellent at creating partnerships. We build alliances – with businesses, hospitals, local authorities, governments and other institutions.

In December last year, the InnoLife Knowledge and Innovation Community, a €2.1bn project supported by the European Institute of Innovation and Technology, was launched to address the impact of ageing populations and dependence. The scale of the project, not just its funding, is truly impressive. It brings together 144 European companies, research institutes and universities across nine EU countries, including the University of Cambridge, to tackle one of the major challenges that will affect us all. And that scale is exactly what is needed if we are to overcome society’s grand challenges. Put simply, we cannot access the talent, develop the infrastructure or provide the funding at a national level alone. We need to leverage expertise across multiple geographies and sectors, and to develop networks, learning opportunities, new products and services.

These attributes make it clear that Universities are at the heart of efforts to create growth, economic stability and wellbeing. Equally, Europe can and should be the region to exert influence on the global stage in the interests of our nation states, institutions and especially individual citizens. It is clear that the interests of Europe and Universities are mutually aligned.

However, a perfect storm of fiscal short-sightedness, a political debate on immigration that is based on fear and emotion – in my own country at least – and a slow erosion of universities’ autonomy, threaten our future. A threat to Europe is a threat to our Universities – and vice versa. What are those threats?

Threats to universities, threats to Europe

Funding under pressure

First of all, funding. This is always a complex area with multiple priorities, but let me focus on one much-publicised issue: the plan to divert €2.7bn out of the Horizon 2020 budget to a new European Fund for Strategic Investment. I certainly agree that investment in growth and jobs is crucial at a time when the threat of EU disintegration has never been so great. But cutting the research budget is not the solution: protecting it is.

The European Fund for Strategic Investment has been created to address the challenging economic situation we find ourselves in. Yet its impact on Europe’s long-term competitiveness could be very damaging. To understand the potential ramifications, simply run in reverse the argument that economic growth depends on research and innovation. Less investment, less innovation. Less innovation, fewer jobs. Fewer jobs, more hardship for people and communities. Not to mention that Europe as a region will be weakened, right at the moment when its competitors are increasing their investment in knowledge production.

We have, in Horizon 2020, an excellent, evidence-based framework that was born out of widespread consultation. It is the latest instalment in a long line of research programmes that have, until now, demonstrated a commitment by the European Commission to research and innovation. And yet, a year on from its inception, it has been weakened considerably – a victim of political expediency and false logic. Diverting money from a proven funding model – the success of which stretches back more than 30 years – makes no sense. Be in no doubt: the cuts to research and innovation will damage Europe’s economic future.

UK membership of the EU

Second, we must have a sensible debate about the UK’s contribution to, and membership of, the European Union. There are so many reasons why this is important, but let me give you some that are close to my heart: the positive effects of mutual security and cross-European mobility. My parents were victims of European conflict, captured in Eastern Poland at the outbreak of the Second World War and incarcerated in Siberia. Since the EU was formed, we have avoided such once-common conflagrations, something the University of Vienna and everyone here must be glad of. After their release, their journeys across Asia and the fighting in Italy, my parents chose to settle in the United Kingdom in 1947, as returning to their native Poland was fraught with peril. It is true to say that, without the UK’s open and positive attitude to immigrants then, I would not be standing here in front of you today.

So it alarms and disappoints me in equal measure to hear the manner in which immigration is discussed in the UK, in the media and across the political spectrum. It is the language of the ‘other’: fearful, emotional and reactionary. Migration and freedom of movement have always played a revitalising role in ‘receiving’ economies. It is something that university vice-chancellors know only too well. Nearly a third of our academic workforce is made up of postdoctoral researchers. Highly mobile and ambitious, they are the engine of our research output. Make it difficult or unattractive for them to work with us, and they will take their talents elsewhere. The same is true for international students, the brightest of whom we want to stay in our countries, and in Europe, where they can make positive and long-lasting contributions.

We cannot let political short-sightedness stand in the way of our continued economic recovery. The UK’s future, as a member of the EU, cannot be decided by an intemperate, ill-defined and ill-informed debate on immigration.

Let me be clear: I believe the UK’s future lies at the heart of the EU, and that many people in the UK support that too. EU funding to individuals and institutions alone is too important to be sacrificed for short-term electoral success. Much European funding is collaborative and trans-national by nature, and the same projects could not be pursued, or the same level of impact achieved, were the UK contribution to the EU research or higher education budgets invested at a national level. In an era of globalisation, research and higher education are international and externally facing. Therefore an exit from the EU would be highly damaging to the UK higher education sector.

No, the European Union is not perfect, and there is always room for improvement. But without membership of the Council of the European Union or the European Parliament, the UK would lose the power to influence the future direction of the EU, including its hugely important work in research, innovation and higher education.

For an idea of what this might look like, we need look no further than Switzerland. Following its referendum last year on immigration quotas – carried by the narrowest of margins – the country is no longer able to participate in the Erasmus student exchange programme. Although the Swiss government and the EU have recently bridged some of the gaps, some areas of Horizon 2020 remain off-limits, meaning that the Swiss government has had to put in place, and fund, transitional arrangements for academics unable to access EU research money. And this, I must remind you, in a country that had been incredibly successful in attracting EU funding for research.

The current agreement takes Switzerland up to 2016. Beyond that lies uncertainty. And as all academics will tell you, two years is not a sensible timeframe in which to plan and conduct any significant research project.

I sympathise with colleagues in Switzerland but I hope that they will understand when I say that I don’t want the UK to be in that position. A position that we are certain to be in if we sleepwalk our way through a UK withdrawal from the EU.

A UK exit from the EU would also be detrimental to our European partners. The UK has much to offer, and in the university context an exit would damage a great deal of collaborative research. It would hit ‘grand challenge’ programmes particularly hard: those that seek to tackle global issues such as ageing, energy and climate change, to name but a few. And Europe would be without one of its most influential voices and partners at a time when it needs unity of purpose and vision.

Autonomy

The third threat to universities involves the erosion of our autonomy – at both the individual and institutional level.

When you look at the contribution academic work has made to the progress of humanity, whether in the sciences, or in the arts and humanities, the importance of academic freedom is clear. I am not talking about freedom without accountability. Accountability – in various forms – is important if universities are to retain the trust they need in continuing their work as autonomous, self-governing institutions. I am talking about the freedom of thought and of fundamental, investigator-led inquiry.

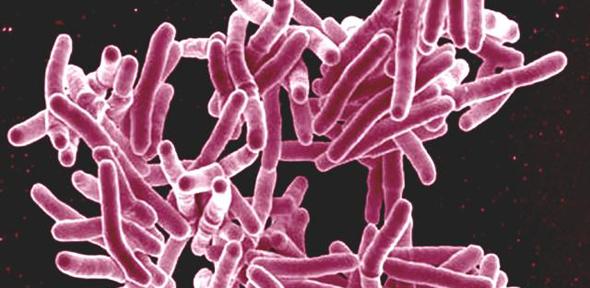

In Cambridge, fundamental research led to the discovery of monoclonal antibodies in the 1970s, followed by basic research to adapt them for human therapeutic use. In the past two years, two new drugs developed at Cambridge have received regulatory approval. The first, Alemtuzumab, is a new treatment for multiple sclerosis. The second, Lynparza, is an anti-cancer medicine.

I make two key points. The first is that timescales like these, stretching over decades, do not fit into short-term, purely government-backed or commercial priorities; yet nobody can seriously claim that the investment of time, money and trust placed in the individuals and groups involved has not contributed to society.

The second is that breakthroughs often come from the cumulative effect of fundamental research: the ongoing development of new knowledge and insight, which is not easily quantified and does not fit neatly into funding cycles or research themes.

Universities create the environments where this can happen – but only if their autonomy is valued and protected. Yes, we enjoy significant levels of autonomy already. But there are many manifestations of autonomy, and many ways in which it can be compromised. Governments, funders and policymakers must listen to universities, and support them in supporting society in this most challenging and yet opportunity-filled of centuries.

Conclusion

So there are important choices to be made by all of those who can shape the future of higher education. Support universities, or put at risk the things that matter most to ordinary people. Jobs. Prosperity. Freedom. Health. Opportunities.

Put in place strong funding streams that support autonomous intellectual inquiry and grand challenge projects. These, not top-down, government-backed strategies, are the root of innovation.

Understand that universities such as Vienna and Cambridge are unique institutions, and respect their space and way of working in the 21st century. We value the past, just as we value our long-term future. We do not fit easily into election cycles, or participate willingly in reactionary politics. But we have a great track record, longevity that is the envy of many, and a clear and tested plan for success.

This is a critical time for Europe. We need to have confidence in the excellence of our institutions, to think globally, and to gain strength from the power of collective endeavour. Partnerships, whether between nations, institutions or individuals, are not easy. They require effort, commitment and compromise. But the rewards far exceed what we could achieve by acting alone.

So while we celebrate this important milestone in the University of Vienna’s illustrious history, let us also commit to making our future something the next generation can look back on in 50 years’ time and say: “The right choices were made.”