People living in the former industrial heartlands of England and Wales are more disposed to negative emotions such as anxiety and depressive moods, more impulsive and more likely to struggle with planning and self-motivation, according to a new study of almost 400,000 personality tests.

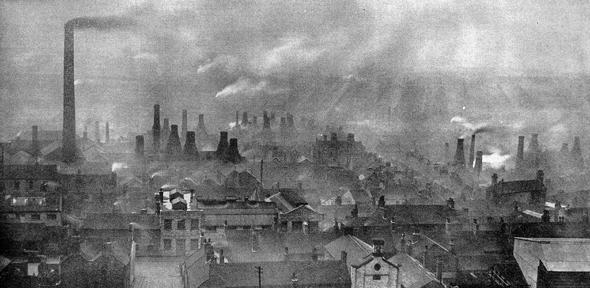

The findings show that, generations after the white heat of the Industrial Revolution and decades on from the decline of deep coal mining, the populations of areas where coal-based industries dominated in the 19th century retain a “psychological adversity”.

Researchers suggest this is the inherited product of selective migrations during mass industrialisation compounded by the social effects of severe work and living conditions.

They argue that the damaging cognitive legacy of coal is “reinforced and amplified” by the more obvious economic consequences of high unemployment we see today. The study also found significantly lower life satisfaction in these areas.

The UK findings, published in the Journal of Personality and Social Psychology, are supported by a North American “robustness check”, with less detailed data from US demographics suggesting the same patterns of post-industrial personality traits.

“Regional patterns of personality and well-being may have their roots in major societal changes underway decades or centuries earlier, and the Industrial Revolution is arguably one of the most influential and formative epochs in modern history,” says co-author Dr Jason Rentfrow, from Cambridge’s Department of Psychology.

“Those who live in a post-industrial landscape still do so in the shadow of coal, internally as well as externally. This study is one of the first to show that the Industrial Revolution has a hidden psychological heritage, one that is imprinted on today’s psychological make-up of the regions of England and Wales.”

An international team of psychologists, including researchers from the Queensland University of Technology, University of Texas, University of Cambridge and the Baden-Württemberg Cooperative State University, used data collected from 381,916 people across England and Wales during 2009-2011 as part of the BBC Lab’s online Big Personality Test.

The team analysed test scores by looking at the “big five” personality traits: extraversion, agreeableness, conscientiousness, neuroticism and openness. The results were further dissected by characteristics such as altruism, self-discipline and anxiety.

The data was also broken down by region and county, and compared with several other large-scale datasets including coalfield maps and a male occupation census of the early 19th century (collated through parish baptism records, where the father listed his job).

The team controlled for an extensive range of other possible influences – from competing economic factors in the 19th century and earlier, through to modern considerations of education, wealth and even climate.

However, they still found significant personality differences for those currently occupying areas where large numbers of men had been employed in coal-based industries from 1813 to 1820 – as the Industrial Revolution was peaking.

Neuroticism was, on average, 33% higher in these areas compared with the rest of the country. In the ‘big five’ model of personality, this translates as increased emotional instability and a tendency towards feelings of worry or anger, as well as a higher risk of common mental disorders such as depression and substance abuse.

In fact, in the further “sub-facet” analyses, these post-industrial areas scored 31% higher for tendencies toward both anxiety and depression.

Areas that ranked highest for neuroticism include Blaenau Gwent and Ceredigion in South Wales, and Hartlepool in England.

Conscientiousness was, on average, 26% lower in former industrial areas. In the ‘big five’ model, this manifests as more disorderly and less goal-oriented behaviours – difficulty with planning and saving money. The underlying sub-facet of ‘order’ itself was 35% lower in these areas.

The lowest three areas for conscientiousness were all in Wales (Merthyr Tydfil, Ceredigion and Gwynedd), with English areas including Nottingham and Leicester.

The BBC Lab questionnaire also included an assessment of life satisfaction, which was, on average, 29% lower in former industrial centres.

While researchers say there will be many factors behind the correlation between personality traits and historic industrialisation, they offer two likely ones: migration and socialisation (learned behaviour).

The people migrating into industrial areas were often those experiencing high levels of ‘psychological adversity’, moving to find employment in the hope of escaping poverty and the distress of rural depression.

However, people who left these areas, often later on, were likely to be those with higher degrees of optimism and psychological resilience, say researchers.

This “selective influx and outflow” may have concentrated so-called ‘negative’ personality traits in industrial areas – traits that can be passed down generations through combinations of experience and genetics.

Migratory effects would have been exacerbated by the ‘socialisation’ of repetitive, dangerous and exhausting labour from childhood – reducing well-being and elevating stress – combined with harsh conditions of overcrowding and atrocious sanitation during the age of steam.

The study’s authors argue their findings have important implications for today’s policymakers looking at public health interventions.

“The decline of coal in areas dependent on such industries has caused persistent economic hardship – most prominently high unemployment. This is only likely to have contributed to the baseline of psychological adversity the Industrial Revolution imprinted on some populations,” says co-author Michael Stuetzer from Baden-Württemberg Cooperative State University, Germany.

“These regional personality levels may have a long history, reaching back to the foundations of our industrial world, so it seems safe to assume they will continue to shape the well-being, health, and economic trajectories of these regions.”

The team note that, while they focused on the negative psychological imprint of coal, future research could examine possible long-term positive effects in these regions born of the same adversity – such as the solidarity and civic engagement witnessed in the labour movement.

Study finds people in areas historically reliant on coal-based industries have more ‘negative’ personality traits. Psychologists suggest this cognitive die may well have been cast at the dawn of the industrial age.

The text in this work is licensed under a Creative Commons Attribution 4.0 International License. For image use please see separate credits above.

Two changes in the 20th century likely contributed further to increased glass sizes. Wine glasses started to be tailored in both shape and size for different wine varieties, both reflecting and contributing to a burgeoning market for wine appreciation, with larger glasses considered important in such appreciation. From 1990 onwards, demand for larger wine glasses by the US market was met by an increase in the size of glasses manufactured in England, where a ready market was also found.

Jakob Seidlitz is a PhD student on the NIH Oxford-Cambridge Scholars Programme. A graduate of the University of Rochester, USA, he spends half of his time in Cambridge and half at the National Institutes of Health in the USA.

Dr Renske Smit is a postdoctoral researcher and Rubicon Fellow at the Kavli Institute of Cosmology and is supported by the Netherlands Organisation for Scientific Research. Prior to arriving in Cambridge in 2016, she was a postdoctoral researcher at Durham University and a PhD student at Leiden University in the Netherlands.
